Notiondipity: What I learned about browser extension development

Posted 3 weeks ago by Milos Svana

Many of my colleagues at profiq and I use Notion for note-taking and work organization. Our workspaces contain a lot of knowledge about our work, our plans, and the articles and books we read. At some point, a thought occurred to me: couldn’t we use all this knowledge to come up with project ideas suited to our skills and interests?

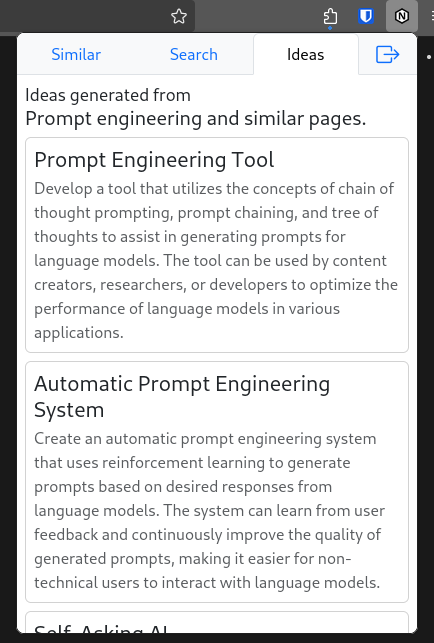

And so Notiondipity was born. This browser extension uses large language models to analyze your Notion workspace and generate project ideas. It currently provides three basic features:

- recommending pages similar to the currently open page,

- recommending pages similar to a user query,

- generating project ideas from the contents of the currently open page and pages most similar to it.

Here is what it looks like:

In this article, I want to tell you a bit about my experience of developing a browser extension. This is the first one I have created. I had to learn a lot of new things and, most importantly, solve a lot of unexpected issues. But let’s start with a bit of context.

The platform

Unsurprisingly, the platform we are working with when developing a browser extension is the browser. But things are more complicated than that. There is no unified browser platform. Although Chromium-based browsers currently dominate the market, there are still millions of Firefox and Safari users. People have put a lot of effort into unifying extension development across browsers, but there are still many differences. Some APIs are supported only by certain browsers, others have a different interface in each browser. Some settings and features are available only in certain browsers. An extension developer has to deal with all of this.

After you successfully create an extension for multiple browsers, you encounter a second issue: distribution. These days, extension stores such as the Chrome Web Store or Firefox Add-ons are the go-to solution. But they come with their own set of issues. Each store can have different policies and technical requirements. For example, if you use code minification, Firefox requires you to submit the original version of your code. Chrome requires you to add a special publisher key to your extension configuration, which makes validation fail in other browsers. Firefox allows you to publish your extension in an experimental mode with less strict requirements, but Chrome has no such option. The whole landscape is a mess.

The basics

Browser extensions can be created using standard web technologies: HTML, CSS, and JavaScript. If you want, you can even use TypeScript and build tools like Vite. A crucial difference between normal web pages and extensions is access to advanced APIs such as tabs, browsing history, or downloads.

The starting point of each extension is a manifest.json file. Its main job is to define the extension points so the browser knows what to do and to provide additional metadata such as required permissions, current version, or the path to the extension icon.

The main extension point utilized by Notiondipity is a popup. A popup is what opens when you click the extension icon, usually located in the top right corner of your browser. It’s just a web page enclosed in a special container. You can use any modern framework to build the popup UI. Notiondipity uses Vue and Bootstrap. You can add a popup by defining an action in the manifest.json file:

    "action": {
        "default_title": "Notiondipity",
        "default_popup": "index.html",
        "default_icon": {
            "48": "icon_48.png",
            "128": "icon_128.png"
        }
    }

I thought that the popup would be everything I needed to build Notiondipity. As it turned out, things are not that simple. So let’s have a look at some of the issues I encountered and how I solved them.

Issue 1: A popup can’t do everything

I said that browser extensions have access to several advanced APIs. Notiondipity requires two such APIs: the Tabs API and access to the current page’s DOM. I quickly figured out that the popup alone was not enough: I ended up using the Tabs API from a background worker, and the DOM can be accessed only from a content script.

As the name suggests, a background worker is responsible for running tasks in the background. More importantly for us, it has access to the Tabs API and other browser-wide features. So it is the place where I put a function for checking whether the current tab contains a Notion page and extracting the page ID.
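The page-ID check mentioned above could be sketched roughly as follows. This is not Notiondipity’s actual code: the function name `extractNotionPageId` is hypothetical, and the sketch assumes that a Notion page URL lives on `notion.so` and ends with a 32-character hexadecimal ID, optionally preceded by the page title.

```typescript
// Hypothetical helper; the URL format is an assumption, not taken from Notiondipity.
const NOTION_HOSTS = new Set(['notion.so', 'www.notion.so'])

function extractNotionPageId(rawUrl: string): string | null {
    let url: URL
    try {
        url = new URL(rawUrl)
    } catch {
        return null // not a parseable URL
    }
    if (!NOTION_HOSTS.has(url.hostname)) {
        return null // the tab shows something other than Notion
    }
    // Take the last path segment and look for a trailing 32-character hex ID.
    const lastSegment = url.pathname.split('/').filter(Boolean).pop() ?? ''
    const match = lastSegment.replace(/-/g, '').match(/[0-9a-f]{32}$/i)
    return match ? match[0].toLowerCase() : null
}

// In a background worker, the URL would come from the Tabs API, for example:
//   const [tab] = await chrome.tabs.query({active: true, currentWindow: true})
//   const pageId = extractNotionPageId(tab?.url ?? '')
```

Keeping the URL parsing in a plain function, separate from the `chrome.tabs` call, also makes it easy to unit test outside the browser.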

Content scripts are similar to regular website JavaScript. They can access and manipulate the DOM of a specific page loaded in a tab. Notiondipity needs this access to extract the text of the current page.

So we have a popup, a background worker, and a content script. The structure of the extension is suddenly more complex. And we also have a new problem: we need these three parts to talk to each other. To provide good recommendations, the popup needs to know the current page ID as well as its contents. Fortunately, all three components have access to an extension-wide messaging API, allowing them to send and respond to messages.

Let’s have a look at how to get the page contents from the content script to the popup. The content script creates a new listener expecting a certain message type. If a correct message is received, we access the DOM, extract the contents of the page, and send them back as a response. Note that we are using the chrome global variable, which works in Firefox too.

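    chrome.runtime.onMessage.addListener(function (message: Message, _, sendResponse) {
        // Only answer requests for the page contents; ignore every other message type.
        if (message.type === MessageType.GET_PAGE_CONTENTS) {
            // The selector targets the element that holds the Notion page body.
            const pageContentsElement = document.querySelector<HTMLElement>('.notion-page-content')
            // Optional chaining yields undefined when no such element exists.
            const pageContents = pageContentsElement?.innerText
            sendResponse(pageContents)
        }
    })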

We can then send a message from the background worker to get the page contents (in Notiondipity the popup does not send this message itself, because the worker is where the Tabs API is used to find the current tab):

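    // Assumed: currentTab is the result of a Tabs API query for the active tab.
    const currentTab = await chrome.tabs.query({active: true, currentWindow: true})
    const pageContent = await chrome.tabs.sendMessage(
        currentTab[0].id, {type: MessageType.GET_PAGE_CONTENTS})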
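The two listings above use `Message` and `MessageType` without showing their definitions. A minimal sketch of what they might look like follows, together with a small helper that routes incoming messages by type. Everything here is an assumption made for illustration: `GET_PAGE_ID` and `createMessageListener` are hypothetical names, and the real Notiondipity types may differ.

```typescript
// Hypothetical message definitions; only GET_PAGE_CONTENTS appears in the article.
const MessageType = {
    GET_PAGE_CONTENTS: 'GET_PAGE_CONTENTS',
    GET_PAGE_ID: 'GET_PAGE_ID',
} as const

type MessageTypeValue = (typeof MessageType)[keyof typeof MessageType]

interface Message {
    type: MessageTypeValue
}

type SendResponse = (response: unknown) => void
type Handler = (message: Message) => unknown

// Build a listener with the (message, sender, sendResponse) shape that
// chrome.runtime.onMessage.addListener expects.
function createMessageListener(handlers: Partial<Record<MessageTypeValue, Handler>>) {
    return (message: Message, _sender: unknown, sendResponse: SendResponse): boolean => {
        const handler = handlers[message.type]
        if (handler === undefined) {
            return false // not our message; leave it to other listeners
        }
        sendResponse(handler(message))
        return false // the response was sent synchronously
    }
}
```

A content script could then register `createMessageListener({GET_PAGE_CONTENTS: () => ...})` with `chrome.runtime.onMessage.addListener`, while the worker registers its own handlers for the message types it owns.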

Issue 2: Different manifest.json for each browser

I target two browsers with Notiondipity: Chrome and Firefox. As already discussed, there are some differences between these two browsers from the extension development standpoint. Many of these differences can be found in the manifest.json file. For example, in Chrome you define background workers like this:

    "background": {
        "service_worker": "worker.js"
    }

But in Firefox, you have to write this:

    "background": {
        "scripts": [
            "worker.js"
        ]
    }

Many such differences are mutually incompatible. If you include properties that Firefox or Chrome doesn’t expect, chances are that the browser starts screaming at you when you try to load the extension.

So we somehow have to maintain multiple versions of the manifest file. How can we do this efficiently? I am already using Vite to build Notiondipity. And as it turns out, someone already had the genius idea of creating a Vite plugin for browser extension development.

The plugin can do two very cool things: it can add special “tags” to the manifest file to make certain settings browser-specific, and it can process references to TypeScript files. Using these two features, we can rewrite our background script definition:

    "background": {
        "{{firefox}}.scripts": [
            "src/worker.ts"
        ],
        "{{chrome}}.service_worker": "src/worker.ts"
    }
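To make the idea of browser tags concrete, here is a rough sketch of how such prefixed keys could be resolved for one target browser. This is not the plugin’s implementation, just an illustration of the technique; the function name `resolveManifest` is hypothetical.

```typescript
type Json = string | number | boolean | null | Json[] | { [key: string]: Json }

// Matches keys such as "{{firefox}}.scripts" and captures the tag and the real key.
const BROWSER_PREFIX = /^\{\{(\w+)\}\}\.(.+)$/

function resolveManifest(template: Json, browser: string): Json {
    if (Array.isArray(template)) {
        return template.map((item) => resolveManifest(item, browser))
    }
    if (template === null || typeof template !== 'object') {
        return template // strings, numbers, and booleans are copied as-is
    }
    const result: { [key: string]: Json } = {}
    for (const [key, value] of Object.entries(template)) {
        const match = key.match(BROWSER_PREFIX)
        if (match === null) {
            result[key] = resolveManifest(value, browser) // untagged key, always kept
        } else if (match[1] === browser) {
            result[match[2]] = resolveManifest(value, browser) // tag matches, strip the prefix
        }
        // Keys tagged for a different browser are dropped.
    }
    return result
}
```

Called with the target `'chrome'`, the tagged definition above would reduce to a `background` object containing only `service_worker`; with `'firefox'`, it would contain only `scripts`.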

Thanks to the plugin, Vite can then build a final extension for the target browser including the manifest file, and put it into the ./dist directory. We can select the browser in the vite.config.ts:

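    import { defineConfig } from 'vite'
    import vue from '@vitejs/plugin-vue'
    // Assumed package name for the web extension plugin; the article does not name it.
    import webExtension from 'vite-plugin-web-extension'

    export default defineConfig({
        plugins: [
            vue(),
            webExtension({
                // The target browser comes from the TARGET environment variable, Chrome by default.
                browser: process.env.TARGET || 'chrome',
                scriptViteConfig: {mode: process.env.MODE, build: {minify: false}},
                htmlViteConfig: {mode: process.env.MODE, build: {minify: false}}
            }),
        ]
    })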

With this configuration, I can select the target browser using the TARGET environment variable and quickly switch between browsers without modifying the configuration file.

Issue 3: Can’t talk to Notion directly

This issue is more project-specific. Notiondipity needs access to your Notion workspace and it uses the official Notion API to get it. The original plan was that the whole extension would live in the browser. There would be no backend component. This would enhance user privacy and simplify development.

However, I quickly encountered an issue with this approach: the Notion API does not respond with proper CORS headers, so it can’t be used directly from a browser. After trying different options, the only viable path was to develop a backend that would act as a proxy for the Notion API.
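The core of such a proxy can be sketched in two small steps: translate the extension’s request into a Notion API request, then return Notion’s answer with a CORS header attached. The sketch below is hypothetical and says nothing about how the real Notiondipity backend is built; the function names, the pinned `Notion-Version` value, and the example origin are assumptions.

```typescript
// Hypothetical sketch of the proxy idea; the real backend is not shown in the article.
const NOTION_API_BASE = 'https://api.notion.com/v1'
const NOTION_VERSION = '2022-06-28' // assumed; use whichever API version you target

interface UpstreamRequest {
    url: string
    method: string
    headers: Record<string, string>
    body?: string
}

interface ProxyResponse {
    status: number
    headers: Record<string, string>
    body: string
}

// Translate a request coming from the extension into a request to the Notion API.
function buildNotionRequest(path: string, method: string, token: string, body?: string): UpstreamRequest {
    return {
        url: `${NOTION_API_BASE}/${path.replace(/^\/+/, '')}`,
        method,
        headers: {
            'Authorization': `Bearer ${token}`,
            'Notion-Version': NOTION_VERSION,
            'Content-Type': 'application/json',
        },
        body,
    }
}

// Wrap Notion's answer with the CORS header that the browser checks for.
function toExtensionResponse(status: number, body: string, allowedOrigin: string): ProxyResponse {
    return {
        status,
        headers: {
            'Content-Type': 'application/json',
            'Access-Control-Allow-Origin': allowedOrigin,
        },
        body,
    }
}
```

A server handler would call `buildNotionRequest`, perform the HTTP request with its client of choice, and pass the result through `toExtensionResponse`, using the extension’s own origin (for example a `chrome-extension://` URL) as `allowedOrigin`.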

Development simplicity was gone. However, since I already had to create the backend component, I decided to extend it with more features. For example, it employs a lot of caching to make things fast and to save some money on OpenAI API calls. A new feature currently under development is saving ideas to a personal list of “good” ideas worth further thought.

Having a backend creates another challenge: updates. Sometimes you would like to introduce backwards-incompatible changes to your backend’s API. If you do this, you also have to update the code accessing the affected parts of the API in the extension itself. Just like a mobile or a desktop app, extensions have a different update cycle than the backend. You are in full control of the backend and can deploy new versions whenever you want. However, updating the extension itself is a lengthy process. There is a delay between submitting a new version to an extension store and its publication. And there is an even worse delay between the publication of a new version and users downloading it. Users update their extensions at different times and some don’t update at all. As a result, people are using multiple versions of your extension at the same time.

I haven’t fully solved this issue yet, but I have a few ideas. First, I could run multiple versions of the backend at the same time and point each new version of the extension at the matching backend. Second, I could simply tell users to update the extension if they want to continue using it. Both solutions can be combined: I can maintain the two or three most recent versions of the backend, which should give me enough time to publish and distribute new extension versions. At the same time, I can let users know that an update is available and block extension versions that are too old. Another approach that we use in other projects and that is also worth considering here is path-based versioning: I could add a version prefix to each API endpoint path, for example, /v1/ideas/.
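Both ideas, rejecting extension versions that are too old and prefixing endpoint paths with an API version, fit into a few lines. The following is only a sketch under assumptions: the function names are hypothetical, and version strings are assumed to be dot-separated numbers like the `version` field in manifest.json.

```typescript
// Split "1.10.2" into [1, 10, 2]; non-numeric parts count as 0.
function parseVersion(version: string): number[] {
    return version.split('.').map((part) => Number.parseInt(part, 10) || 0)
}

// Returns -1, 0, or 1, comparing part by part and treating missing parts as 0.
function compareVersions(a: string, b: string): number {
    const left = parseVersion(a)
    const right = parseVersion(b)
    const length = Math.max(left.length, right.length)
    for (let i = 0; i < length; i++) {
        const diff = (left[i] ?? 0) - (right[i] ?? 0)
        if (diff !== 0) {
            return Math.sign(diff)
        }
    }
    return 0
}

// The backend could use this to reject requests from extension versions that are too old.
function isExtensionSupported(extensionVersion: string, minSupported: string): boolean {
    return compareVersions(extensionVersion, minSupported) >= 0
}

// Path-based versioning: prefix every endpoint with the API version.
function versionedPath(apiVersion: number, endpoint: string): string {
    return `/v${apiVersion}/${endpoint.replace(/^\/+/, '')}`
}
```

The extension could send its own version, read from `chrome.runtime.getManifest().version`, with every request so that the backend can decide whether to serve it or ask the user to update.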

Issue 4: Automated testing

The last issue I want to talk about is automated UI testing. At some point, I thought it might be a good idea to write a few UI tests for the extension popup with Cypress or Playwright. For many extensions, this wouldn’t be an issue, as you can open the popup in a standard browser tab using a special URL. Notiondipity, however, depends on the tab that is active when the popup opens, which complicates things.

I’ve studied browser APIs in detail but didn’t find anything that would allow me to work with popups directly. So there are two paths I could take. First, I could incorporate the extension UI directly into the Notion page. It would be accessible via a standard tab and easily testable. But implementing this approach means a lot of work.

The second approach is based on the ability of browsers to open a popup in a tab by visiting a special URL. But this would change the currently active tab, from which Notiondipity extracts the Notion page ID and contents to provide relevant recommendations. I would have to introduce a few mocks that would replace the use of Tabs API with a static page ID and contents. This seems much more reasonable than rebuilding the whole UI.
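One way to prepare for such mocks is to hide the source of the page ID and contents behind a small interface, so that the popup code does not care whether the data comes from the Tabs API or from a fixture. The sketch below is an assumption about how this could look, not Notiondipity’s code; all names in it are hypothetical.

```typescript
interface PageContext {
    pageId: string
    contents: string
}

// The popup depends only on this interface, never on chrome.* directly.
interface PageContextProvider {
    getCurrentPage(): Promise<PageContext | null>
}

// Test double: always returns the same static page, no browser APIs involved.
function staticPageContextProvider(page: PageContext | null): PageContextProvider {
    return { getCurrentPage: async () => page }
}

// Example of popup logic written against the interface.
async function describeCurrentPage(provider: PageContextProvider): Promise<string> {
    const page = await provider.getCurrentPage()
    if (page === null) {
        return 'Open a Notion page to get recommendations.'
    }
    return `Recommendations for page ${page.pageId}`
}

// The production provider would implement getCurrentPage() by asking the
// background worker for the page ID and contents via extension messaging.
```

A UI test that opens the popup in a regular tab could then be built with `staticPageContextProvider`, while the published extension keeps using the provider backed by the Tabs API.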

Expect the unexpected

You know the story. You have a great project idea you want to implement. As an experienced developer, you can create a perfect vision of how everything will be implemented. You think things will be easy. You start the work. But soon you discover an unexpected issue. And another one. And now the third. This slows you down. Hours become days and days become weeks.

Browser extensions are no different. In this article, I discussed several such unexpected issues and offered solutions to at least some of them. I hope my experience helps you in at least two ways: by giving you solutions if you encounter similar issues, and by helping you realize that you always need to expect the unexpected; especially when you feel confident.

But I also think that there is a silver lining to all these difficulties: they force you to learn something new. If you want your project to work, you need to solve all the difficult issues you encounter. To solve these issues you have to learn about new technologies or aspects of technologies you already know that are alien to you. And this makes you a better engineer.